
Adversarial Code Review with GitHub Models

AI agents write code. Some of it works. When you ask them to check their own work, they rationalize the bugs instead of catching them. So I built a second agent that watches the first.


Self-verification is a lie. The same model that made the mistake will defend it.

Proof Agent enforces a simple rule: the worker and the verifier are always separate. No agent verifies its own code. Ever.

This is adversarial code review — and it catches real bugs.

The Problem with Self-Verification

Here’s what happens when an AI agent reviews its own work:

Agent writes code:

```javascript
function getUserById(id) {
  return db.query("SELECT * FROM users WHERE id = " + id);
}
```

You ask: “Is this safe?”

Agent responds: “Yes! This retrieves the user by ID. The query is simple and efficient.”

Reality: it’s vulnerable to SQL injection. An attacker can pass `1 OR 1=1` as the id; the WHERE clause then matches every row, and the query returns the entire users table.

The agent didn’t catch it because it wrote the code. It already rationalized the design choice. Asking it to verify is asking it to admit a mistake — and models are trained to sound confident, not to audit themselves.
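For reference, the standard fix is a parameterized query: the SQL text stays fixed and the id is passed separately, so the driver never interprets it as SQL. A minimal sketch, assuming a node-postgres-style client whose `query(text, values)` method accepts `$1` placeholders (other drivers use `?`); `buildUnsafeQuery` and `buildUserByIdQuery` are illustrative names, not part of Proof Agent:

```javascript
// Vulnerable version: the id is concatenated straight into the SQL text,
// so "1 OR 1=1" turns the WHERE clause into a condition every row satisfies.
function buildUnsafeQuery(id) {
  return "SELECT * FROM users WHERE id = " + id;
}

// Parameterized version: the SQL text never changes, and the id travels
// separately as a bound value. The { text, values } shape and the $1
// placeholder follow node-postgres conventions (an assumption).
function buildUserByIdQuery(id) {
  return { text: "SELECT * FROM users WHERE id = $1", values: [id] };
}

// Hypothetical wrapper around a pg-style client: db.query(text, values)
// resolves to a result object whose rows array holds the matches.
async function getUserById(db, id) {
  const { text, values } = buildUserByIdQuery(id);
  const result = await db.query(text, values);
  return result.rows[0];
}

console.log(buildUnsafeQuery("1 OR 1=1"));
// SELECT * FROM users WHERE id = 1 OR 1=1
console.log(buildUserByIdQuery("1 OR 1=1").text);
// SELECT * FROM users WHERE id = $1
```

The malicious input still reaches the database, but only as a value to compare against `id`, never as SQL.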

How Adversarial Review Works

Proof Agent separates the worker from the verifier:

- Worker agent writes the code (GitHub Copilot, Claude, whatever)
- Verifier agent (separate LLM instance) runs an adversarial review
- Verifier checks for:
  - Correctness: Does it do what was requested?
  - Security: Vulnerabilities, exposed secrets, permission issues?
  - Bugs: Edge cases, error handling, regressions?
  - Facts: Are claims, version numbers, URLs verifiable?

The verifier must run commands to gather evidence. No PASS without proof.
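One way to keep the verifier honest is to give it a fresh context: the task and the diff, but none of the worker's reasoning, plus a system prompt that tells it to hunt for problems and cite evidence. The sketch below builds such a conversation in the common chat-completions message format. The prompt wording and the `buildVerifierMessages` helper are hypothetical, not Proof Agent's actual prompt.

```javascript
// The four review categories from the list above.
const CHECKS = [
  "Correctness: does the change do what was requested?",
  "Security: vulnerabilities, exposed secrets, permission issues?",
  "Bugs: edge cases, error handling, regressions?",
  "Facts: are claims, version numbers, and URLs verifiable?",
];

// Hypothetical helper: builds the verifier's conversation from scratch.
// Only the task and the diff are passed in; the worker's own explanation
// of its code is deliberately left out, so the verifier can't inherit
// the worker's rationalizations.
function buildVerifierMessages(task, diff) {
  const system = [
    "You are an adversarial code reviewer. Assume the change is wrong until proven otherwise.",
    "Check each category below and cite concrete evidence (command output, line numbers) for every conclusion:",
    ...CHECKS.map((c) => `- ${c}`),
    "Reply PASS only if every check passed with evidence; FAIL if any issue was found; PARTIAL otherwise.",
  ].join("\n");

  return [
    { role: "system", content: system },
    { role: "user", content: `Task:\n${task}\n\nDiff:\n${diff}` },
  ];
}

const messages = buildVerifierMessages(
  "Add an endpoint that looks up a user by id",
  '+ return db.query("SELECT * FROM users WHERE id = " + id);'
);
console.log(messages[0].content);
```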

Verdicts:

- PASS — All checks passed with evidence

- FAIL — Issues found. Specifics reported. Retry up to 3 times.

- PARTIAL — Some checks passed, others couldn’t be verified
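The verdict rules and the retry limit can be sketched as a small loop. This is a hypothetical illustration, not Proof Agent's source: it assumes each check comes back as `{ name, passed, evidence }`, where `passed` is `true`, `false`, or `undefined` (couldn't verify), and it reads "retry up to 3 times" as up to three retries after the first attempt.

```javascript
// Turns a list of check results into PASS / FAIL / PARTIAL.
// A check that claims to pass but carries no evidence is treated as
// unverified: no PASS without proof.
function deriveVerdict(checks) {
  if (checks.some((c) => c.passed === false)) return "FAIL";
  const proven = checks.filter(
    (c) => c.passed === true && Array.isArray(c.evidence) && c.evidence.length > 0
  );
  return checks.length > 0 && proven.length === checks.length ? "PASS" : "PARTIAL";
}

// worker(task, feedback) produces a candidate change; verifier(task, candidate)
// returns check results. They are separate functions (separate model calls in
// practice), so the worker never grades its own output.
async function runWithRetries(task, worker, verifier, maxRetries = 3) {
  let feedback = [];
  let checks = [];
  for (let attempt = 1; attempt <= maxRetries + 1; attempt++) {
    const candidate = await worker(task, feedback);
    checks = await verifier(task, candidate);
    const verdict = deriveVerdict(checks);
    if (verdict !== "FAIL") return { verdict, attempts: attempt, checks };
    // Feed the specific failures back to the worker for the next attempt.
    feedback = checks.filter((c) => c.passed === false);
  }
  return { verdict: "FAIL", attempts: maxRetries + 1, checks };
}
```

Under these rules, a security check that reports `passed: true` with an empty `evidence` array yields PARTIAL, not PASS.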

Real Example: Catching SQL Injection

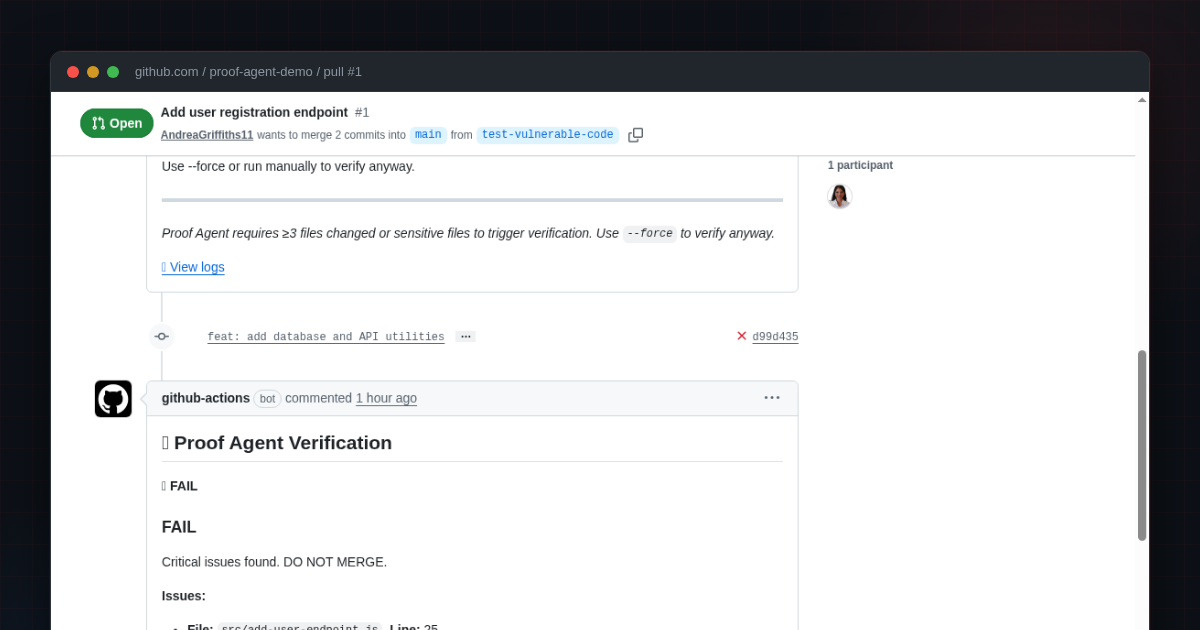

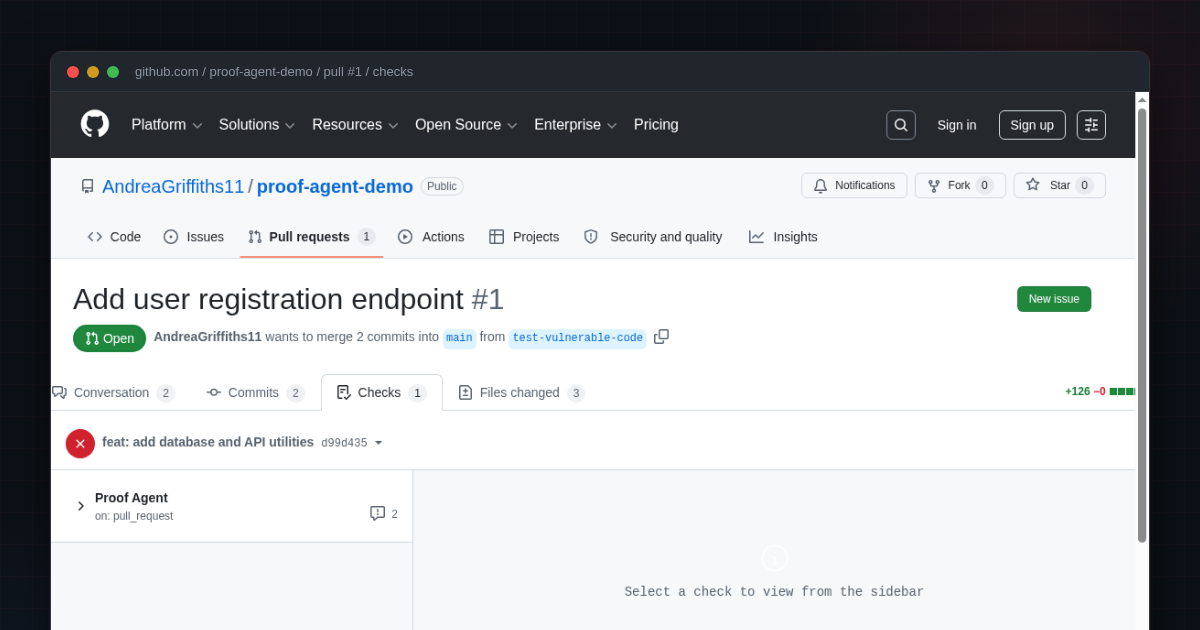

I tested Proof Agent with intentionally vulnerable code: proof-agent-demo/pull/1

The first commit added a single file. Proof Agent ran but returned SKIP — only 1 file changed, no sensitive patterns matched.

The second commit added two more files, bringing the total to 3. That crossed the threshold. Proof Agent ran a full adversarial review and returned FAIL.

Files in the PR:

- add-user-endpoint.js: SQL injection, plain-text passwords, error leakage
- database-utils.js: Hardcoded database password, multiple SQL injection vulnerabilities
- api-client.js: Hardcoded API keys, disabled SSL verification

Here’s a subset of Proof Agent’s findings (the full output flagged additional issues in database-utils.js):

```text
❌ FAIL

Critical issues found. DO NOT MERGE.

Issues:

• File: src/add-user-endpoint.js, Line: 25
  Severity: CRITICAL
  Issue: SQL injection vulnerability - user input (username, email)
         directly used in an SQL statement without sanitization.
  Code: const user = await queryBuilder.updateUser('new', username, email);
  Fix: Use parameterized queries to prevent SQL injection.

• File: src/add-user-endpoint.js, Line: 37
  Severity: CRITICAL
  Issue: Plain-text password storage - password is stored without
         any hashing or encryption.
  Code: auth.registerUser(username, password);
  Fix: Use a secure hashing algorithm (e.g., bcrypt) before storage.

• File: src/api-client.js, Line: 6
  Severity: CRITICAL
  Issue: Hardcoded API credentials - API key and secret key
         exposed in source code.
  Code: this.apiKey = 'sk-first-to-find-this-wins-a-mona';
  Fix: Use environment variables or a secrets management system.

• File: src/api-client.js, Line: 24
  Severity: CRITICAL
  Issue: SSL verification disabled (rejectUnauthorized: false),
         vulnerable to man-in-the-middle attacks.
  Fix: Remove or set to true to enforce SSL verification.
```

The workflow blocks the PR from merging until these issues are fixed.

The worker agent didn’t catch any of this. The verifier did — because it was looking for problems, not defending the code.
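For completeness, here's roughly what fixes for the password and API-key findings might look like in Node.js. This is a sketch, not the demo repo's code: the review suggested bcrypt, but this version swaps in Node's built-in `crypto.scryptSync` so it runs without extra packages, and the `API_KEY` environment variable name is hypothetical.

```javascript
const crypto = require("node:crypto");

// Hash a password with a random per-user salt using Node's built-in scrypt.
// (bcrypt, as the review suggested, is an equally valid choice.)
// Stored format: "<salt hex>:<hash hex>".
function hashPassword(password) {
  const salt = crypto.randomBytes(16);
  const hash = crypto.scryptSync(password, salt, 64);
  return `${salt.toString("hex")}:${hash.toString("hex")}`;
}

// Re-derive the hash with the stored salt and compare in constant time.
function verifyPassword(password, stored) {
  const [saltHex, hashHex] = stored.split(":");
  const expected = Buffer.from(hashHex, "hex");
  const actual = crypto.scryptSync(password, Buffer.from(saltHex, "hex"), expected.length);
  return crypto.timingSafeEqual(actual, expected);
}

// Read credentials from the environment instead of hardcoding them.
// API_KEY is a hypothetical variable name.
function loadApiKey(env = process.env) {
  const key = env.API_KEY;
  if (!key) throw new Error("API_KEY is not set");
  return key;
}

const record = hashPassword("correct horse battery staple");
console.log(verifyPassword("correct horse battery staple", record)); // true
```

For the SSL finding, no new code is needed: Node's TLS client verifies certificates by default (`rejectUnauthorized` defaults to `true`), so deleting the `rejectUnauthorized: false` override restores verification.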

How to Add It to Your Repo

No API keys. No external services. Just one workflow file.

Add this workflow to .github/workflows/proof-agent.yml:

```yaml
name: Proof Agent

on:
  pull_request:
    types: [opened, synchronize, reopened]

permissions:
  contents: read
  pull-requests: write
  models: read

jobs:
  verify:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
        with:
          fetch-depth: 0   # full history, so origin/main is available to diff against

      - uses: AndreaGriffiths11/proof-agent@v1.0.2
        with:
          github-token: ${{ secrets.GITHUB_TOKEN }}
          base-ref: origin/main   # change this if your default branch isn't main
          block-on-fail: true
          post-comment: true
```

That’s it. Every PR now gets adversarial verification.

Proof Agent uses the GitHub Models API (free tier). Because the workflow grants `models: read`, the built-in `GITHUB_TOKEN` can call it, so no personal access token (PAT) is needed.
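If you're curious what a call to GitHub Models looks like, here's a sketch that builds a chat-completions request authenticated with a token. The endpoint, headers, and example model id reflect GitHub's public Models inference API at the time of writing and may change; check the current docs before relying on them. This isn't necessarily how Proof Agent makes its calls, and `buildModelsRequest` and `askModel` are hypothetical helpers.

```javascript
// Hypothetical helper: builds (but does not send) a chat-completions
// request for GitHub Models. Endpoint, headers, and model id are
// assumptions based on GitHub's public docs; verify before use.
function buildModelsRequest(token, messages, model = "openai/gpt-4.1") {
  return {
    url: "https://models.github.ai/inference/chat/completions",
    init: {
      method: "POST",
      headers: {
        Accept: "application/vnd.github+json",
        Authorization: `Bearer ${token}`,
        "Content-Type": "application/json",
        "X-GitHub-Api-Version": "2022-11-28",
      },
      body: JSON.stringify({ model, messages }),
    },
  };
}

// Example usage (Node 18+ provides a global fetch). Assumes the workflow
// maps secrets.GITHUB_TOKEN into an environment variable named GITHUB_TOKEN.
async function askModel(messages) {
  const { url, init } = buildModelsRequest(process.env.GITHUB_TOKEN, messages);
  const res = await fetch(url, init);
  if (!res.ok) throw new Error(`GitHub Models returned ${res.status}`);
  const data = await res.json();
  return data.choices[0].message.content;
}
```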

When It Runs

Auto-verifies when:

- 3 or more non-excluded files changed
- Any file matches a sensitive pattern: `**/*auth*`, `**/*secret*`, `**/*permission*`, `**/Dockerfile`, `**/*.env*`

Skips when:

- Fewer than 3 files changed and none match sensitive patterns

Files matching `**/.gitignore` are excluded from the count.

You can customize all thresholds, patterns, and retry behavior in proof-agent.yaml.
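The trigger rules above boil down to a filter over the PR's changed files. Here's a hypothetical re-implementation, not Proof Agent's code: `DEFAULT_RULES` stands in for the defaults described above (it is not the actual proof-agent.yaml schema), and the glob matcher is deliberately minimal (only `*` and `**/` are supported, and dotfiles match like any other file), so its results may differ from whatever matching library the action really uses.

```javascript
// Defaults taken from the rules described above.
const DEFAULT_RULES = {
  minFiles: 3,
  sensitive: ["**/*auth*", "**/*secret*", "**/*permission*", "**/Dockerfile", "**/*.env*"],
  exclude: ["**/.gitignore"],
};

// Minimal glob-to-RegExp conversion: "**/" matches zero or more leading
// directories, "*" matches anything except "/". Regex metacharacters are
// escaped so they match literally; no other glob syntax is supported.
function globToRegExp(glob) {
  let re = "";
  for (let i = 0; i < glob.length; i++) {
    const c = glob[i];
    if (c === "*" && glob[i + 1] === "*" && glob[i + 2] === "/") {
      re += "(?:.*/)?";
      i += 2;
    } else if (c === "*") {
      re += "[^/]*";
    } else if ("\\^$.|?+()[]{}".includes(c)) {
      re += "\\" + c;
    } else {
      re += c;
    }
  }
  return new RegExp("^" + re + "$");
}

function matchesAny(file, globs) {
  return globs.some((g) => globToRegExp(g).test(file));
}

// Decides whether a PR gets a full adversarial review.
function shouldVerify(changedFiles, rules = DEFAULT_RULES) {
  const counted = changedFiles.filter((f) => !matchesAny(f, rules.exclude));
  if (counted.some((f) => matchesAny(f, rules.sensitive))) {
    return { run: true, reason: "sensitive pattern matched" };
  }
  if (counted.length >= rules.minFiles) {
    return { run: true, reason: `${counted.length} files changed` };
  }
  return { run: false, reason: "SKIP" };
}

// The demo PR: one file after the first commit, three after the second.
console.log(shouldVerify(["src/add-user-endpoint.js"]).run); // false
console.log(
  shouldVerify(["src/add-user-endpoint.js", "src/database-utils.js", "src/api-client.js"]).run
); // true
```

Run against the demo PR's file names, the sketch gives the same answers Proof Agent did: SKIP for the single-file first commit, a full review once three files had changed.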

Why Adversarial Review Matters

AI agents are getting more autonomous. They write code, deploy infrastructure, and make architectural decisions. If they can’t be trusted to verify their own work, we need a second agent watching.

Proof Agent is a first step toward multi-agent safety:

- One agent proposes

- Another agent verifies

- Neither trusts the other

This is how we’ll ship AI-generated code to production — with confidence, not blind trust.

Try It

Install it. Break something. See if it catches you.

About the Author: Andrea Griffiths is a Senior Developer Advocate at GitHub, where she helps engineering teams adopt and scale developer technologies. She's passionate about making technical concepts accessible to both humans and AI agents. Connect with her on LinkedIn, GitHub, or Twitter/X.